Looking for statistical significance in rent contracts

My job requires me to look at a bunch of rent contracts. The other day I was analyzing a list of them that looked something like this (I recreated the table so as to not disclose any sensitive information, but this is not relevant to the point I’ll make):

The first question I asked myself was: what is the sorting criterion used here? That is, what determines the order in which the contracts are shown in this list?

I encourage you to stop now and try to figure it out for yourself.

My first guess, as I bet was yours if you paid enough attention, was that they were sorted by End Date, from earlier to later. But I was not sure. How could I know that the contracts were indeed being sorted by End Date and that this was not just a fortuitous coincidence?

I used a little bit of high school math to calculate the probability that these contracts would end in this precise order by chance. If the order had been assigned randomly, the probability that Anna’s contract would be the first one was 1 in 5, since there were five contracts in total. For the second position, the probability was 1 in 4; then 1 in 3, and so on. So the overall probability that the contracts would end exactly in this order by chance is 1 in 5 × 4 × 3 × 2 × 1, or 1 in 120. This equates to a chance of about 0.83%. This seems pretty low. Since they did end up in that order, and the chances of that having happened randomly were so low, it sounds like it was not random, and that End Date was indeed the sorting criterion.
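For the skeptical, here is a minimal Python sketch of that arithmetic. The variable names are mine, and the only assumption is that every ordering of the five contracts is equally likely.

```python
import math

n_contracts = 5  # number of contracts in the list

# Number of distinct ways to order the contracts: 5 * 4 * 3 * 2 * 1
n_orderings = math.factorial(n_contracts)

# Chance of landing on one specific ordering if all are equally likely
p_single_order = 1 / n_orderings

print(f"{n_orderings} possible orderings, p = {p_single_order:.2%}")
```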

In academic research, this probability that you could get, by accident, results at least as good as the ones you got is called the p-value. For instance, if some medical treatment is shown to make patients feel better and there was only a 1 in 20 chance that this would have happened just out of luck, you say that the treatment works with a p-value of 5%. As a rule of thumb, anything below 5% is considered pretty good, gets called “statistically significant” and can get you published in a prestigious journal.

Ok, so I had a p-value of less than 1% for my End Date hypothesis. That means we can confidently say this was the sorting rule rather than merely an accident, pat ourselves on the back, and go home, right? Right???

Weeeeell, not really.

If you think about it, there’s nothing special about the End Date column in particular. If in the process of trying to figure out how the lines were sorted I had observed that the Building column was alphabetically ordered, I would have been pretty happy too. I would have performed all the same calculations, would have arrived at the same p-value of 0.83%, and would have asserted that Building was definitely the right criterion, as the odds of finding that by chance were much less than 1 in 20. As a matter of fact, any particular order would have a p-value of 0.83%, even if it made no sense and therefore did not make me happy.

What is happening here is that I did not choose one specific column to be adequately ordered in advance: I looked at a bunch of them and found one that was. Yes, chances below 5% are rare, but if you look for them enough times, you will eventually find them. Being dealt a straight flush is pretty rare, but if you are always playing poker, it will happen once in a while.

Ok, so what can we do about this? If you think about it, the question I really want to answer is not how improbable it was that the End Date column would end up in that particular order, but how improbable it was that I would have found any pattern that would have made me happy. As I had five columns, there were 5 different line orders (one per column, assuming no two columns imply the same order), among 120 possible ones, that would have made me claim “Aha! I found the pattern.”

A 5 in 120 chance equates to a probability of 4.17%. So it still makes the p-value cut and is statistically significant. So yeah, now I can confidently claim the End Date pattern was no accident… right?

Well, there is still one more thing we need to consider. There are always at least two ways in which a column can be ordered. End Date, for instance, can go from earlier to later or from later to earlier. Tenant Name can be in alphabetical order or reverse alphabetical order. And so on.

So my real p-value is actually two times that, or… 8.33%, which, it hurts me to say, is higher than 5% and therefore statistically insignificant.
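If you would rather count than trust my arithmetic, the 5 in 120 and 10 in 120 figures can be checked by brute force. The sketch below is only an illustration: the table is a made-up stand-in (each cell is just a rank from 1 to 5, not real contract data), chosen so that no two columns imply the same line order, and the helper names are mine. It walks through all 120 line orders and counts how many leave at least one column sorted.

```python
from itertools import permutations

# Hypothetical stand-in for the contracts table: 5 rows x 5 columns.
# Each cell is the rank (1-5) of that row within its column.
table = [
    (1, 2, 3, 5, 4),
    (2, 1, 5, 3, 1),
    (3, 4, 1, 2, 5),
    (4, 3, 2, 4, 2),
    (5, 5, 4, 1, 3),
]

def is_sorted(values, reverse=False):
    """True if values are in ascending (or, with reverse, descending) order."""
    return list(values) == sorted(values, reverse=reverse)

def has_pattern(rows, allow_descending):
    """True if at least one column of rows is sorted in an accepted direction."""
    for column in zip(*rows):
        if is_sorted(column):
            return True
        if allow_descending and is_sorted(column, reverse=True):
            return True
    return False

all_orders = list(permutations(table))  # every possible line order
hits_ascending = sum(has_pattern(o, allow_descending=False) for o in all_orders)
hits_either = sum(has_pattern(o, allow_descending=True) for o in all_orders)

print(f"ascending only: {hits_ascending}/{len(all_orders)}")
print(f"either direction: {hits_either}/{len(all_orders)}")
```

With real data the counts could come out slightly lower, since two columns might happen to imply the same line order.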

(In case you are wondering, I just kept doing these calculations out of curiosity, because the best way to know the sorting rule is to just look at the algorithm, which in this case was… random. At least the math checks out.)

Another way I could have avoided this problem would be by using what statisticians call the Bonferroni correction, which consists of making your p-value threshold more demanding by dividing it by the number of things you are measuring. In this case, instead of accepting anything below 5%, we would have had to divide that number by 10, since there are 10 possible orders that would have shown a pattern (two for each of the five columns). And as my 0.83% p-value at the beginning would have been greater than 5%/10, or 0.5%, my hypothesis would have been found statistically insignificant.

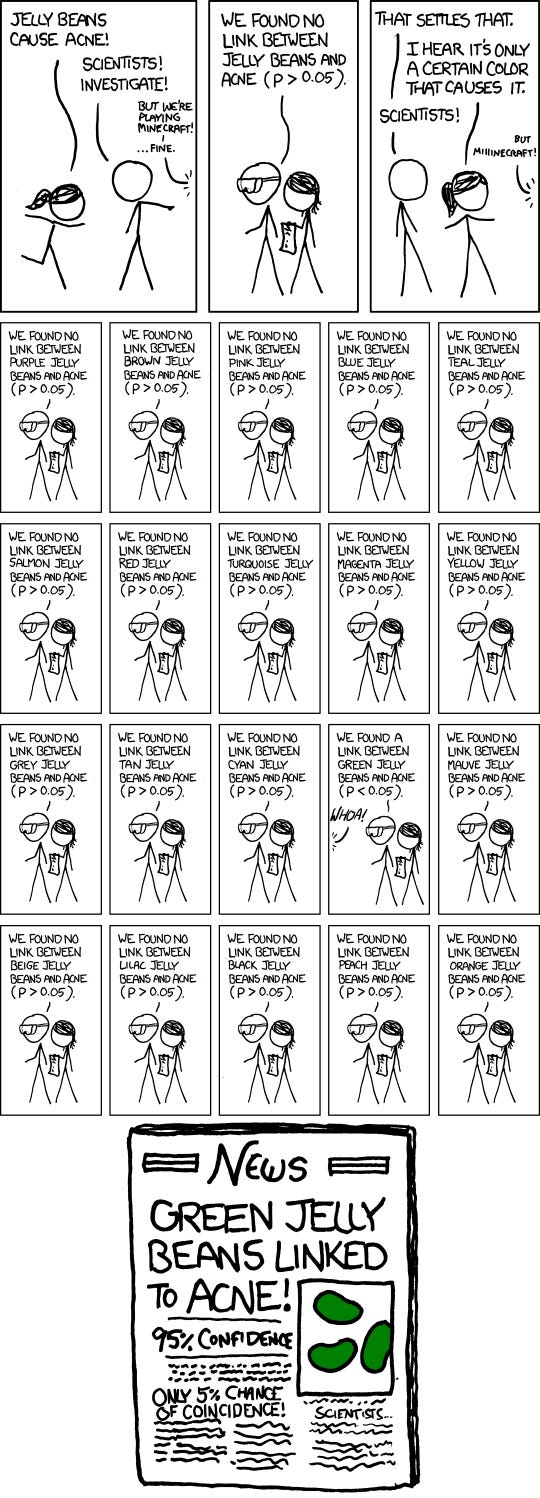
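The Bonferroni check described above fits in a few lines of Python. This is a minimal sketch using the numbers from this post; the variable names are my own.

```python
alpha = 0.05        # the usual 5% significance threshold
n_comparisons = 10  # 5 columns x 2 sort directions
p_value = 1 / 120   # chance of one specific ordering, about 0.83%

# Bonferroni: divide the threshold by the number of comparisons
bonferroni_threshold = alpha / n_comparisons

is_significant = p_value < bonferroni_threshold
print(f"threshold = {bonferroni_threshold:.2%}, p = {p_value:.2%}, "
      f"significant: {is_significant}")
```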

I should mention that these kinds of problems happen in science more than you would expect. This is called the multiple comparisons problem: scientists do an experiment, measure a bunch of different outcomes, and one of these is found to be statistically significant. The scientists did not find anything actually relevant; they just measured a bunch of stuff and got lucky with one of them.

This issue gets even worse when there are multiple scientists working on the same thing (which is usually the case). Say 20 different scientists set out to investigate whether avocado relieves back pain. 19 of them get negative results, so they file their studies in a drawer and no one hears about them. One of the scientists accidentally gets patients to get a little bit better by eating avocado, publishes his results in a prestigious journal, and is interviewed by The New York Times in an article with the headline “AVOCADO CURES BACK PAIN”. You see the problem.
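To put a rough number on the avocado scenario, here is a small sketch. It assumes that avocado truly does nothing, that the 20 studies are independent, and that each one uses the 5% threshold; none of these figures come from real studies.

```python
n_studies = 20  # independent teams testing the same (false) hypothesis
alpha = 0.05    # each study's chance of a lucky "significant" result

# Probability that every single study correctly finds nothing
p_all_negative = (1 - alpha) ** n_studies

# Probability that at least one study gets a false positive
p_at_least_one = 1 - p_all_negative

print(f"Chance of at least one lucky study: {p_at_least_one:.1%}")
```

Under those assumptions, a lucky positive result somewhere among the 20 studies is more likely than not.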

How does science get around this? There are two main ways: replications and meta-analyses. In replications, other scientists perform the same experiment looking to find that avocado cures back pain. They inevitably fail, so they publish their works saying “Hey, maybe we were just lucky the first time around.” Unfortunately, replications don’t get as much publicity as bold, surprising claims, so everyone keeps believing in the power of avocados and there is not much we can do about it.

Meta-analyses combine multiple studies to see if the results are still statistically significant when you pool a bunch of data together. Maybe if you put enough studies side by side you notice that avocados don’t do much for back pain. This is, by the way, how we know that ivermectin does not really work against COVID-19 despite a handful of well-designed, well-conducted studies showing it does.

The layperson’s takeaway from all this could be “don’t believe in studies in which scientists measure a bunch of stuff and find one with positive results.” But unfortunately, popular science articles don’t really talk about all the other things that the scientists measured and found no effects for, and, until recently, scientists didn’t even say what they were looking for until the final results were published.

So a better takeaway is, first, scientific journalism sucks, and second, “be skeptical of weird findings based on one study and one study only.” Also, “maybe also be skeptical of findings that may not even be that weird and there may even be more than one good study backing them but that are not supported by robust meta-analyses or are not a consensus among experts of the field or something like this, I don’t know, man, doing science is hard, we are trying our best.” Moreover: “please create good and clear sorting algorithms for your rent contracts tables or else your employees will lose time thinking about the multiple comparisons problem in science.”

Thanks to Allan Paladino and Pedro Paiva for reading drafts of this.